Cortical Circuits That Mediate Visual Perception and Visually Guided Behavior

My laboratory studies cortical circuits that mediate visual perception and visually guided behavior. This work involves a creative fusion of the disciplines of neurophysiology, psychology, and computation. Monkeys are trained to perform demanding discrimination tasks, and we record from single or multiple neurons in visual cortex during performance of these tasks. This allows us to directly compare the ability of neurons to discriminate between different sensory stimuli with the ability of the behaving animal to make the same discrimination. This approach also allows us to examine the relationship between neuronal activity and perceptual decisions (independent of the physical stimulus). In addition, the techniques of electrical microstimulation and/or reversible inactivation are used to establish causal links between physiology and behavior. Computational modeling plays an important role in interpreting results and making predictions for future experiments.
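One common way to put a neuron and an observer on the same scale is a receiver operating characteristic (ROC) analysis of spike counts. The text above does not say which analysis is used, so the following is only an illustrative sketch. The function name `roc_area` and the example spike counts are hypothetical. The returned value is the probability that an ideal observer, given one spike count from each of two stimulus conditions, would correctly identify which stimulus produced which count.

```python
def roc_area(pref_counts, null_counts):
    """Area under the ROC curve for two sets of spike counts.

    Equals the probability that a randomly drawn count from
    `pref_counts` exceeds one drawn from `null_counts`, with ties
    counted as half. 0.5 means no discrimination, 1.0 means perfect.
    """
    if not pref_counts or not null_counts:
        raise ValueError("both conditions need at least one trial")
    wins = 0.0
    for p in pref_counts:
        for n in null_counts:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pref_counts) * len(null_counts))


# Hypothetical spike counts (spikes per trial) for two stimuli.
stimulus_a = [12, 15, 14, 18, 16]
stimulus_b = [9, 11, 13, 10, 12]
neuronal_performance = roc_area(stimulus_a, stimulus_b)
```

Computing this value at each stimulus strength gives a neuronal performance curve that can be set beside the animal's proportion of correct choices on the same trials.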

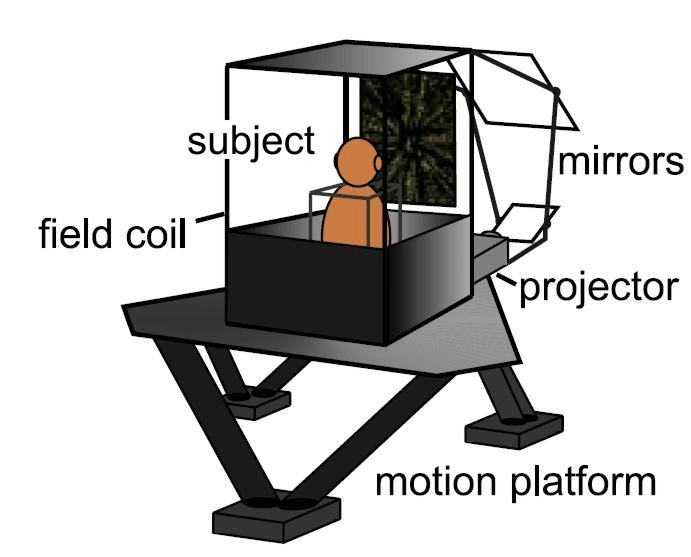

Virtual-reality motion system for studies of the neural basis of 3D spatial perception and navigation. The subject sits atop a 6-degree-of-freedom motion platform that can perform combined translations and rotations about any axis in 3D space. The subject views images of a virtual environment that are rear-projected onto the display screen and precisely synchronized with platform motion.

Our research currently has two main foci:

Neural mechanisms of depth perception. The image formed on each retina is a two-dimensional projection of the three-dimensional (3D) world. Objects at different depths project onto slightly disparate points on the two retinas, and the brain is able to extract these binocular disparities from the retinal images and construct a vivid sensation of depth. My lab studies the mechanisms by which binocular disparity information is encoded, processed, and read out by the brain in order to perceive depth and compute 3D surface structure. We are beginning to elucidate the brain areas that contribute to stereoscopic depth perception under specific task conditions, although much remains to be learned about this interesting cognitive process. We have also recently discovered a population of neurons that combines visual motion with eye movement signals to code depth from motion parallax, and future work will focus on how depth cues from disparity and motion parallax are integrated by neurons.

Sensory integration for perception of self-motion and object motion. To accurately perceive our own motion through space, and in turn the motion of objects relative to our own trajectory, we integrate information from the visual and vestibular systems. Integrating information across different sensory systems is a fundamental issue in systems neuroscience. Using a 3D virtual reality system to provide monkeys with naturalistic combinations of visual stimuli and inertial motion, we are studying how cortical neurons integrate visual and vestibular signals to compute one's direction of heading through 3D space. We are also exploring the neural computations by which the brain compensates for self-motion to judge the motion of objects in space. An overarching goal is to develop a detailed neurobiological account of Bayesian optimal cue integration.
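As a rough illustration of what Bayesian optimal cue integration predicts, the sketch below combines a visual and a vestibular heading estimate under the textbook assumptions of independent Gaussian noise on each cue and a flat prior. The function name `combine_cues` and the example numbers are hypothetical and are not taken from any experiment described here. Each cue is weighted by its reliability (inverse variance), and the combined estimate is never less precise than the better single cue.

```python
import math


def combine_cues(mu_vis, sigma_vis, mu_vest, sigma_vest):
    """Reliability-weighted combination of two heading estimates.

    Assumes independent Gaussian noise on each cue and a flat prior.
    Returns (combined_mean, combined_sigma, visual_weight).
    """
    if sigma_vis <= 0 or sigma_vest <= 0:
        raise ValueError("standard deviations must be positive")
    rel_vis = 1.0 / sigma_vis ** 2    # reliability = inverse variance
    rel_vest = 1.0 / sigma_vest ** 2
    w_vis = rel_vis / (rel_vis + rel_vest)
    mu = w_vis * mu_vis + (1.0 - w_vis) * mu_vest
    sigma = math.sqrt(1.0 / (rel_vis + rel_vest))
    return mu, sigma, w_vis


# Hypothetical headings in degrees: a reliable visual cue (sigma 1)
# and a noisier vestibular cue (sigma 2) that disagree by 10 degrees.
mu, sigma, w_vis = combine_cues(10.0, 1.0, 0.0, 2.0)
```

Under these assumptions the combined estimate is pulled toward the more reliable cue, and its standard deviation falls below that of either cue alone. Those two signatures are what behavioral and neural data can be tested against.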

Researcher: Greg DeAngelis, Ph.D.

Visual neuroscience; multi-sensory integration; neural coding; linking neurons to perception